Introduction

Economic modeling techniques are widely used to provide a quantitative framework for economic evaluations that aim to inform policy decisions. Central to the validity of judgments that are based on the results of economic models is an assessment of the quality of the models themselves. Decision makers should have confidence that the quality of the models they are using is sufficiently robust to justify their decisions, and researchers developing models should demonstrate that their work meets acceptable quality standards.

Although assessment of the quality of models can be argued to be important from several different perspectives (in this article the main arguments are summarized), it is also necessary to consider what factors determine the quality of models and how quality might be assessed. To address these issues several approaches are considered. The article considers structure, data, and consistency as suggested by Philips et al. (2004).

In general, the quality of models depends on:

- Its fitness for purpose: relevance to the underlying research question;

- The methods by which data inputs to the model are combined (both of which relate to the quality of the model structure);

- The quality of the source studies from which data are taken; and

- The model’s internal and external consistency.

The quality of reporting of methods and results of models are also considered. Finally, key implications for both current practice and further research are highlighted.

Factors Determining Model Quality

Fitness For Purpose

Although there is no ‘right’ answer and analysts have to exercise judgment, it is important that the choices made by the analyst be explained. This includes the choice of the overall modeling approach. Models developed for economic evaluations can be broadly grouped into cohort models (e.g., decision trees and Markov chains) and individual sampling models (also known variously as patient-level simulations) (Briggs et al., 2006).

The choice of appropriate modeling approach depends on the research question posed. For example, with a treatment for an acute condition where the question is whether the intervention is ‘effective’ or ‘not effective’ a decision tree model may be sufficient, whereas chronic diseases, especially those characterized by periods of relapse and remission, can be modeled with Markov chains. When individual risks are contingent on previous events or pathways, individual sampling models may be more appropriate. Similarly, the evaluation of vaccination and screening programs for infectious diseases may be most appropriately modeled with more sophisticated individual sampling models (‘transmission dynamic models’) that take account of the changing risk of infection as the prevalence of disease changes following the introduction of an intervention as the population begins to gain the advantages of herd immunity. The modeling approach and structure must, therefore, reflect the research question, the properties of the evaluated technologies, the characteristics of the disease, and the treatment/intervention setting (Institute for Quality and Efficiency in Health care (IQWiG), 2009).

Structure

What is a ‘good’ structure? One answer is plausibility: Whether or not the patient pathways and assumptions represented by a model’s structure are plausible. All models by definition simplify reality. The task of the analyst is to design one that is sufficiently complex to reflect the nature and subtleties of the pathway being modeled yet simple enough to be (1) efficiently computed; (2) understood by the intended audience; and (3) capable of generating the necessary information for credible and authoritative guidance.

The degree of simplification and compromise is a matter of judgment, and hence there is no unique model structure that could be considered objectively ‘correct.’ This introduces a type of uncertainty within decision modeling termed structural uncertainty (also called model uncertainty) (Briggs et al., 2012). This is to be distinguished from parameter uncertainty. Aspects of structural uncertainty include the overall modeling approach; choice of comparator(s); scope; duration of treatment effect; the events handled by the model; and any statistical model used to estimate parameters, clinical uncertainty about the mechanism or pathway of care, and the absence of clinical (and other) evidence (Bojke et al., 2006).

The most common approach to handling structural uncertainties like the duration of treatment effect and the scope of the analysis is through the use of scenarios (Bojke et al., 2006). A ‘base case’ is commonly recommended, with alternative possible scenarios presented for the decision maker to judge the fit with their setting. Investigating the impact of more fundamental structural choices, such as alternative overall designs of the model or selection of different events such as wider or narrower scopes of costs and benefits may sometimes be possible: Alternative models could be constructed and tested to see if they yielded, or were likely to yield, different results. They could also be formally combined using a variant of Bayesian model averaging (Bojke et al., 2006). As there is a very large number of plausible potential model variants, such attempts will always have to be restricted to a reasonable subset of possible models.

Data Inputs To A Model

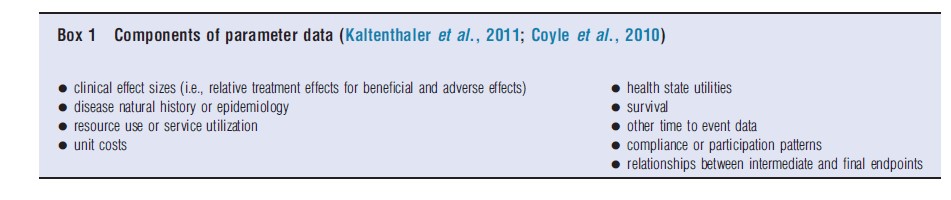

The quality of data used in model development can impact directly on the reliability of results. One way in which this can occur is through the impact of data quality on a model’s parameters. Each probability, cost, and outcome in an economic model is expressed in terms of a set of measurable, quantifiable characteristics, or parameters. In economic models, common types of parameters are probabilities, costs, relative treatment effects, and utilities (Briggs et al., 2006). Components of data needed to assign values to these parameters are summarized in Box 1 below.

These data are collected or derived from sources that may include empirical research studies, routine administrative databases, reference sources, and expert opinion. In principle, and often in practice, there is more than one potential source for each data component. For this reason, and because the results of economic models may depend in large part on choices between available sources of data, the processes of data identification, appraisal, selection, and use need to be explained and justified.

However, the use of data in model development is not restricted to the assigning of values to parameters. Data are also used to support every stage of model development, from establishing a conceptual understanding of the decision problem as noted above, through to the choice of sensitivity and uncertainty analysis (Briggs et al., 2006; Paisley, 2010). Thus, economic models have multiple information needs, requiring different types of data, drawn from various sources, and the factors that determine data quality encompass all of these uses, types, and sources.

Philips et al. (2004) identify four dimensions of quality regarding data: identification, modeling, incorporation, and assessment of uncertainty. Each dimension has a corresponding set of attributes of good practice, or factors that determine quality, and each attribute refers to the quality of processes used in the identification, appraisal, selection, or use of data at the different stages of the model development process.

Data quality is only one of two criteria to be used in identifying and selecting data in economic models. Before quality assessment, the available data also need to be assessed in terms of applicability or relevance. The initial assessment of applicability may result in a large proportion of potential data sources being rejected (Kaltenthaler et al., 2011).

Consistency

Assessing the consistency, or as some agencies have termed it validity (Canadian Agency for Drugs and Technologies in Health, 2006; Institute for Quality and Efficiency in Health care [IQWiG], 2009), of a model is a subject of debate and there is no unanimous agreement among experts (Philips et al., 2004; Institute for Quality and Efficiency in Health care [IQWiG], 2009 and Canadian Agency for Drugs and Technologies in Health, 2006 all offer different views). Regardless of how it is assessed it is useful to briefly consider what is meant by consistency. Philips et al. (2004) identified four aspects: internal consistency; external consistency; between model consistency; and predictive validity. Each of these has been briefly summarized.

Internal Consistency

Internal consistency requires that the mathematical logic of the model is consistent with the model specification and that data have been incorporated correctly, for example, that there are no errors in programming. One source of inconsistency in model specification relates to the conditioning of an action on an unobservable event. For example, in a model of cancer surveillance, decisions to change treatment may be made as soon as progression or recurrence of the cancer occurs despite the fact that in reality recurrence or progression would not be observed until some monitoring test has been performed.

Internal consistency may also be affected by asymmetries within the model. Such asymmetries may or may not represent errors but probably serve to highlight areas for further investigation. An example is when the physiological response of a patient to a particular event that occurs at several different points within a treatment pathway is modeled differently in corresponding areas of the model. It goes without saying that internal consistency will also be affected by the accuracy of the model programming and data entry. Errors in the model syntax or administrative errors (e.g., in labeling of data) are, at least initially, inevitable in the design and execution of any model. Proof reading and testing element by element are, therefore, a critical part of the design process. Ideally, these tasks would be completed by a researcher who is familiar with the decision question but who was not involved in the design and execution of the model and hence is less subject to bias and to uncritical acceptance of the often implicit assumptions that the original analysts may have made.

Other checks for internal consistency are to test formulae and equations separately before they are entered into the model to ensure that they are correctly expressed and can give the anticipated results. Sensitivity analyses using extreme or zero values can be used to identify apparently counterintuitive results. Likewise, examining the results of known scenarios, even ones that are unlikely or cannot occur in practice, can also be useful to identify counterintuitive results. The presence or absence of counterintuitive results does not necessarily indicate a problem (or lack of one) with internal consistency. However, when counterintuitive results are identified they should be explained, which will involve unpicking and examining the mechanisms that have led to them. A more elaborate test of internal consistency is to attempt to replicate the model in another software package and then comparing the results of both.

External Consistency

External consistency requires that the design and structure of the model makes sense to other experts in the field (a process that can be aided by presenting the model in pictorial form (Canadian Agency for Drugs and Technologies in Health, 2006)) and also that the results make intuitive sense. This is particularly the case where the results of the model run counter to expectations, and there may be temptation to disbelieve them. Given that the model is internally valid, it should then be structured such that a plausible narrative can be extracted explaining the logic of the results and why, if it departs from prior expectations, it does so.

Such face validity is only one aspect of external consistency. A further aspect is consistency of the results compared with other ‘independent’ data. However, if such independent data exist it would be more appropriate for them to be included in the model in the first place. This issue is not addressed in methods guides produced by some national HTA bodies (e.g., Institute for Quality and Efficiency in Health Care (IQWiG), 2009 and Canadian Agency for Drugs and Technologies in Health, 2006).

Between Model Consistency

This type of consistency is sometimes also included as an aspect of external consistency. It relates to a comparison of a model’s results with those of other models. The models to be compared should be developed independently but, because models might be developed at different times and in subtly different contexts, interpretation should proceed with caution as results may legitimately differ so that similarity of result does not necessarily confer confidence in the robustness or validity of either, nor does dissimilarity necessarily undermine confidence. Furthermore, even where two models are developed for the same purpose and at the same time, convergence of results might not occur because each model is the product of myriad judgments and assumptions, which might differ between research teams. This occurs in the multiple technology appraisals process conducted for National Institute for Health and Clinical Excellence (NICE) in England. Different stakeholders (usually manufacturers) can submit their own models as evidence to NICE. These models may also be used to inform the development of a further model by an independent academic group. The stakeholders will each have access to different data (as commercially sensitive data will not be shared with other manufacturers) with only the independent academic model having access to all data. Taken as a body these models can all be thought of as providing mutual sensitivity analyses enabling one to identify critical assumptions, important parameters, and the sensitive ranges for those parameters.

Predictive Validity

A final form of consistency identified by Philips et al. (2004) is predictive validity. They noted that some commentators argue that the predictions of a model should be compared with the results of a predictive study. On the contrary, they argue that it is inappropriate to expect a model to predict the future with such accuracy because it can only use the data and understanding that was available at the time it was constructed.

The reason for constructing a model in the first place is the lack of appropriate data from a single source with which to make a decision, so it inevitably represents a synthesis of current knowledge. Once such an appropriate prospective study has been undertaken, it should surely replace the model. However, such a comprehensive single study is most unlikely to exist in practice. Furthermore, the interventions under evaluation may be implemented under different conditions to those assumed in the model because of factors such as technological change. It is notable that consideration of such an element of consistency is not included in some of the more recent methods guides from Health Technology Assessment Agencies (National Institute for Health and Clinical Excellence, 2008; Institute for Quality and Efficiency in Health care (IQWiG), 2009 and Canadian Agency for Drugs and Technologies in Health, 2006).

Assessing The Quality Of Models

Structure

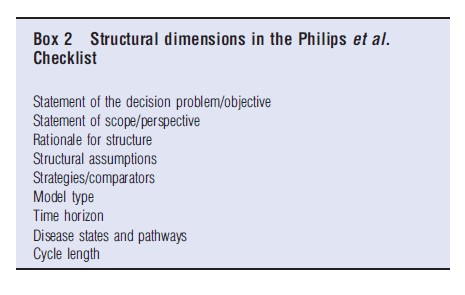

Several tools and checklists have been employed to assess the quality of economic evaluations in general, and of decision models in particular. The majority cover different aspects of the structure, data, and consistency of the model. Philips et al. (2004) proposed a framework by which models could be assessed. In common with other quality assessment tools, this framework comprises a series of criteria that capture methodological dimensions which analysts could be expected to have addressed in the conduct and reporting of their evaluations. These can be assessed with specific yes/no responses accompanied by supporting commentary. The nine structural dimensions identified by the Philips checklist are summarized in Box 2.

A clear statement of the decision problem is essential to define the entire evaluation: A vague question can either lead to a vague answer or one that fails to address precisely the decision problem at hand. Therefore, the objective of the analysis should be stated, along with a statement of who the primary decision maker is.

Philips et al. (2004) suggested that the scope (analytic perspective) of a decision model, which crucially affects which costs and outcomes are included in the analysis, should be stated and justified. Many decision-making organizations have adopted specific perspectives for their reference cases.

The structure of the model should reflect both the underlying disease process and service pathways under consideration. As noted above, the model type should be ‘appropriate’ to the decision question. Similarly, the time horizon of a model should be ‘appropriate,’ i.e., sufficient to capture all important differences in costs and outcomes between the options.

As again noted above, the model should have a degree of face validity in that its structure should make intuitive sense.

In principle, every ‘feasible and practical’ alternative treatment strategy should be considered rather than only a subset of comparators. This is because cost-effectiveness is always a relative concept (i.e., a treatment is considered cost-effective relative to another treatment). Therefore, exclusion of relevant comparators may lead to erroneous conclusions being drawn about which intervention(s) to invest in or disinvest from. Current practice should be included among comparators.

Finally, as with the choice of model structure, the disease states/pathways included and cycle length in a decision model with discrete time intervals should ideally reflect the underlying biology of a disease as well as the impacts of interventions.

Data

Most published guidelines on assessment of quality of data in economic models focus on the transparency of reporting of methods and results and on the quality of methods to identify data to populate model parameters. They do not assess methodological quality per se, nor do they consider the wider uses of data in the model development process (Kaltenthaler et al., 2011). A key reason for the general lack of consensus on standards for quality assessment with respect to data is that the scope of data relevant to an economic model is not entirely predefined, but emerges in the course of the iterative model development process. As such there is no objectively ‘right’ or ‘wrong’ set of data for use to inform the model development process, but rather an interpretation of what data is relevant to the decision problem at hand (Kaltenthaler et al., 2011).

The process of identifying data to inform model development is necessarily an iterative, emergent, and nonlinear process rather than a series of discrete information retrieval activities, such that the searches conducted and the sequence in which they are conducted will legitimately differ between models (Kaltenthaler et al., 2011). Although this process can still be systematic and explicit, it is also highly specific to each individual model. Given these issues, recently published guidelines (Kaltenthaler et al., 2011) have recommended approaches to data identification that focus on:

- Maximizing the rate of return of potentially relevant data;

- Judging when to stop searching because ‘sufficient’ data have been identified, such that further efforts to identify additional relevant data would be unlikely to improve the analysis; and

- Prioritizing key information needs and paying particular attention to identifying data for those parameters to which the results of a model are particularly sensitive. (There is a potential Catch 22 here in that it is unclear a priori which data points the model will be sensitive too. In practice, the analyst relies on experience from other similar models, although a modeling solution is possible in that running a model with dummy data can inform this as well.)

Coyle et al. (2010) proposed a hierarchy to rank the quality of sources of the different components of data used to populate model parameters. This hierarchy highlights variation between different components of data in which sources may be regarded as ‘high quality,’ emphasizing sources that in principle generate causal inferences with high internal validity for clinical effect-sizes, and sources that are in principle highly applicable to the specific decision problem at hand for all other components.

Although such hierarchies can, with refinement, offer a useful tool for the quality assessment of data sources, they do not incorporate assessment of specific dimensions of quality, or risk of bias, within each source (Kaltenthaler et al., 2011). Various instruments and checklists have been developed for assessing risk of bias in both randomized controlled trials and nonrandomized studies of effects that may be used to populate parameters in economic models. Perhaps, the most prominent is the Cochrane Risk-of-Bias tool (Higgins et al., 2011). However, these instruments are not equally applicable to all the diverse potential sources of data for model development. The grading of recommendations assessment, development and evaluation (GRADE) system offers more promise in this respect. It provides a consistent framework and a set of criteria for rating the quality of evidence collected (or derived) from all potential sources for all data for populating model parameters. Sources include both research- and nonresearch-based sources (e.g., national disease registers, claims, prescriptions or hospital activity databases, or standard reference sources such as drug formularies or collected volumes of unit costs) (Brunetti et al., 2013). Consistent with the hierarchy proposed by Coyle et al. (2010), the GRADE system allows flexibility in the quality assessment process to include additional considerations alongside internal validity. These include, as part of the ‘indirectness’ criterion in GRADE, the applicability of data components to the specific decision problem at hand.

Reporting Methods And Results

Lack of transparency in the reporting of methods and results of economic evaluations undermines their credibility and, in turn, threatens the appropriate use of evidence for cost-effectiveness in decision-making. The development and use of increasingly sophisticated economic modeling techniques may increase the complexity of economic models at the expense of transparency. It is, therefore, important for analysts to strike an appropriate balance between scientific rigor, complexity, and transparency, to reduce the ‘black box’ perception of economic models and provide decision-makers with an ability to understand intuitively ‘what goes in’ and ‘what comes out.’ This requires analysts to use and record formal, replicable approaches at each stage of the model development process and report these approaches in a transparent and reproducible way (Cooper et al., 2007).

In the quality assessment checklist based on their 2004 review of published good practice guidelines in economic modeling, Phillips et al. found that 19 of 42 identified attributes of good practice and 33 of 57 questions for critical appraisal related to the transparency of reporting and the explicitness of the justification of methods. These attributes and questions are concerned with transparency and justification of choices made at every stage of the model development process. The key principle underlying checklists for economic models is that decision-makers should be able to reach a judgment easily, based on information in the published report alone, on each of the following:

- Whether analysts have invested sufficient effort to identify an acceptable set of data to inform each stage of the model development process;

- That sources of data have not been identified serendipitously, opportunistically, or preferentially; and

- Whether any choices between alternative sources of data, assumptions (in the absence of data), or adaptations or extrapolations of existing data, are explained and justified with respect to the specific decision problem at hand (Kaltenthaler et al., 2011).

Implications For Practice And Research

As economic models are increasingly used to inform policy and practice, concerns about whether or not their quality is ‘good enough’ for this purpose come to the fore. Assessing quality is important for the credibility of the whole process but it can be time consuming. Sufficient time must be planned for this work to be undertaken. Given the sometimes pressing time restrictions that modelers face, policy makers need to be aware that a full assessment of all aspects of quality may not be possible. Analysts in such circumstances have to be clear about what they have and have not done. They can help ensure that quality has been maximized within the constraints of the research process by referring to existing checklists described above for assessing the quality of models in general or of those designed for specific circumstances and stakeholders. Because no model can be perfect, any limitations should be explored and if they cannot be rectified or improved then the limitation should be highlighted in the study report along with an analysis of its consequences, the direction, and possible size of any bias and some cautions about external validity.

Bibliography:

- Bojke L., Claxton K., Palmer S., Sculpher M. (2000). Defining and characterising structural uncertainty in decision analytic models. CHE Research Paper 9. York: Centre for Health Economics, University of York.

- Briggs, A., Sculpher, M. and Claxton, K. (2006). Chapter 4: Making decision models probabilistic. In Briggs, A., Sculpher, M. and Claxton, K. (eds.) Decision modelling for health economic evaluation, pp. 77–120. Oxford: Oxford University Press.

- Briggs, A., Weinstein, M., Fenwick, E., et al. (2012). Model parameter estimation and uncertainty: A report of the ISPOR-SMDM modeling good research practices task force-6. Value in Health 15, 835–842.

- Brunetti, M., Shemilt, I., Pregno, S., et al. (2013). GRADE guidelines: 10. Special challenges – quality of evidence for resource use. Journal of Clinical Epidemiology 66(2), 140–150.

- Canadian Agency for Drugs and Technologies in Health (2006). Guidelines for the evaluation of health technologies, 3rd ed. Ottawa: Canadian Agency for Drugs and Technologies in Health.

- Cooper, N. J., Sutton, A. J., Ades, A. E., Paisley, S. and Jones, D. R. (2007). Use of evidence in economic decision models: Practical issues and methodological challenges. Health Economics 16(12), 1277–1286.

- Coyle, D., Lee, K. M. and Cooper, N. J. (2010). Chapter 9: Use of evidence in decision models. In Shemilt, I., Mugford, M., Vale, L., Marsh, K. and Donaldson, C. (eds.) Evidence-based decisions and economics: health care, social welfare, education and criminal justice, pp. 106–113. Oxford: WileyBlackwell.

- Higgins, J. P. T., Altman, D. G. and Sterne, J. A. C. (eds.) (2011). Chapter 8: Assessing risk of bias in included studies. In Higgins, J. P. T. and Green, S. (eds.) Cochrane handbook for systematic reviews of interventions, pp. 188–242. Version 5.1.0. The Cochrane Collaboration. Available at: www.cochranehandbook.org (accessed 28.03.13).

- Institute for Quality and Efficiency in Health care (IQWiG). (2009). Working paper Modelling. Version 1.0. Cologne: Institute for Quality and Efficiency in Health care.

- Kaltenthaler E., Tappenden P., Paisley S., Squires H. (2011). NICE DSU Technical Support Document 13: Identifying and reviewing evidence to inform the conceptualisation and population of cost-effectiveness models. Sheffield: NICE Decision Support Unit, School of Health and Related Research. Available at: https://pubmed.ncbi.nlm.nih.gov/28481494/.

- National Institute for Health and Clinical Excellence (2008). Guide to the methods of technology appraisal. London: National Institute for Health and Clinical Excellence.

- Paisley, S. (2010). Classification of evidence used in decision-analytic models of cost-effectiveness: A content analysis of published reports. International Journal of Technology Assessment in Health Care 26(4), 458–462.

- Philips, Z., Ginnelly, L., Sculpher, M., et al. (2004). Review of guidelines for good practice in decision-analytic modeling in health technology assessment. Health Technology Assessment 8(36), 1–172.

- Deeks, J. J., Dinnes, J., D’Amico, R., et al. (2003). Evaluating non-randomized intervention studies. Health Technology Assessment 7, 27.

- National Institute for Health and Clinical Excellence (2009). Methods for the development of NICE public health guidance, 2nd ed. London: National Institute for Health and Clinical Excellence.

- Philips, Z., Bojke, L., Sculpher, M., Claxton, K. and Golder, S. (2006). Good practice guidelines for decision-analytic modelling in health technology assessment: A review and consolidation of quality assessment. PharmacoEconomics 24(4), 355–371.